Now that we've started doing a better job on this blog of giving you a glimpse into how our development and design processes work here at HubSpot, it's time we shed some light on the design half of the equation. We've recently overhauled our product navigation menu, so we thought this would make an excellent case study for looking at how customer research and testing have helped us move in the right direction, and move quickly.

Rapid, Customer-driven Development At HubSpot

First off, let's be clear: "customer-driven" and "rapid" development can and should work together. An argument you often hear against taking the time to do user interviews and testing is that it slows down the development process. But as folks like Steve Krug have been preaching for quite a while now, you can get great, directional results from user testing very quickly and from just a few sessions. And taking the time to confirm your direction early on will save you a lot more time than rewriting the application after the fact, when you realize your customers simply don't want what you've built.

In this case, we needed to examine the basic organization of our applications and the way navigation worked in the product. This had historically been an area of great debate; back in the distant past, the decision was made to organize the applications based on the Inbound Marketing methodology. This style of organization within the product helped to communicate the benefits of Inbound Marketing, but it increasingly made organizing our growing menu of applications much more difficult.

You Gotta Have Goals

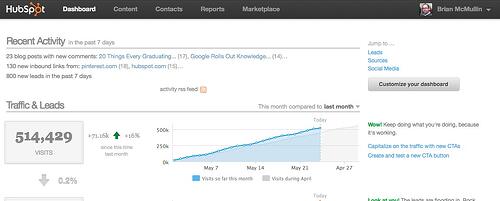

The goal of the navigation redesign project was to make the product more usable. In the user testing lab, we'd measure this with things like task completion rate and the amount of time it took users to complete key tasks. In the wild, we'd be looking at how we could increase overall app usage, how we might increase our Net Promoter Score (NPS), and how we might decrease the number of customer support cases related to finding applications and moving around within the product.
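For readers who want to see what those lab measures look like in practice, here is a minimal Python sketch. The session records and survey scores are invented for illustration, and the function names are our own. The NPS calculation uses the standard definition (percentage of 9-10 responses minus percentage of 0-6 responses); nothing here is the actual tooling we used.

```python
from statistics import median

# Hypothetical session records: (task completed?, seconds spent on the task).
sessions = [
    (True, 42.0),
    (True, 65.5),
    (False, 120.0),
    (True, 38.0),
]


def completion_rate(records):
    """Fraction of sessions in which the participant finished the task."""
    return sum(1 for done, _ in records if done) / len(records)


def median_time_on_task(records):
    """Median seconds on task, counting completed sessions only."""
    return median(seconds for done, seconds in records if done)


def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


print(completion_rate(sessions))      # share of sessions that succeeded
print(median_time_on_task(sessions))  # typical time for a successful attempt
print(nps([10, 9, 9, 7, 2]))          # hypothetical survey responses
```

With only a handful of test sessions, numbers like these are directional rather than statistically rigorous, which is all we need at this stage.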

As a development team, we already spend a lot of time speaking with our customers to help drive our development decisions. We also rely heavily on feedback from our internal stakeholders -- our very own supercharged Marketing team and the Support and Services folks who work hand-in-hand with our customers every day. Our User Experience team, led by the illustrious Josh Porter (@Bokardo), organizes these interviews and tests, and then quickly transforms what we've learned into flows and designs for rapid iteration.

In the case of our navigation menu, we knew that there was plenty of room for improvement, so we started with...

Support And Services As The Front Line

Our Support and Services teams are awesome. No, really. They are awesome. These are *not* the folks that you talk to when you dial up your favorite cable or mobile companies for support. We hire a very special type of talented, charismatic person for these roles, and they consistently receive massive kudos in our surveys and NPS polls. They are also our best source of feedback for the wants and needs of our customers.

For the navigation question, we just walked over to the Support and Services teams here in the office in Cambridge and asked what their experiences had been with customers using the old navigation. Did customers get lost easily in the product? Were there support cases logged due to the issue? Was there general confusion when trying to navigate the product? In this case, it was "yes," "yes," and "yes."

In roughly thirty minutes of conversation with these teams, we confirmed that we had a real product usability problem, which led us to look at…

Our Marketing Team As Lead Customer

Okay. So our marketing team is kind of a big deal. They put up some pretty huge numbers every month when it comes to lead generation. And they are generally the experts when it comes to things like website design, social media, and email marketing. So naturally we listen to them when they say they want something in the product. Or, more precisely, we beg them for their feedback when we have a new product idea.

For this navigation design project, our first stop was figuring out what our own marketing team would expect in the product. To do this, we employed an open card sorting test, letting our guinea pigs on the Marketing team organize the product applications and app components in a way that would make the product most useful to them. From this exercise, we got a much clearer sense of how many app units we had on our hands, as well as some initial direction on overall groupings.

This important, directional step took about four separate tests of roughly thirty minutes each. Our next step was to confirm our Marketing team's inclinations with some actual customer data, so…

Let's Talk To Some Customers!

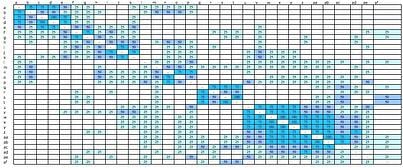

To constrain things a bit further, we cut the number of cards down to about twenty and focused more on the higher-level groupings. Using screenshare conference calls, we tested four customers remotely for roughly thirty minutes each, asking them to organize the twenty cards into groups that would be the most intuitive and useful to them. From this testing, we looked at how frequently items were grouped together (darker blues in the grid below) and saw some key groupings arise: task-based groupings, object-based groupings, and hub-oriented groupings.
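If you'd like to try this kind of analysis yourself, the "how often were two cards grouped together" count can be sketched in a few lines of Python. This is only an illustration: the card names, group labels, and the `co_occurrence` helper are hypothetical, not our actual cards or tooling.

```python
from collections import Counter
from itertools import combinations

# Hypothetical card sorts: one dict per participant, mapping the group label
# that participant chose to the cards they placed in it.
sorts = [
    {"Content": ["Blog", "Landing Pages"], "People": ["Leads", "Email"]},
    {"Publish": ["Blog", "Email"], "Convert": ["Landing Pages", "Leads"]},
    {"Content": ["Blog", "Landing Pages", "Email"], "Contacts": ["Leads"]},
]


def co_occurrence(card_sorts):
    """Count how many participants put each pair of cards in the same group."""
    counts = Counter()
    for sort in card_sorts:
        for cards in sort.values():
            # Sort the names so each pair is always stored in the same order.
            for a, b in combinations(sorted(cards), 2):
                counts[(a, b)] += 1
    return counts


pair_counts = co_occurrence(sorts)
for (a, b), n in pair_counts.most_common():
    print(f"{a} + {b}: {n}/{len(sorts)} participants")
```

Pairs with the highest counts are the ones that would show up as the darkest cells in a grid, and clusters of high-count pairs hint at candidate menu groupings.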

Now, which grouping is actually most "usable"?

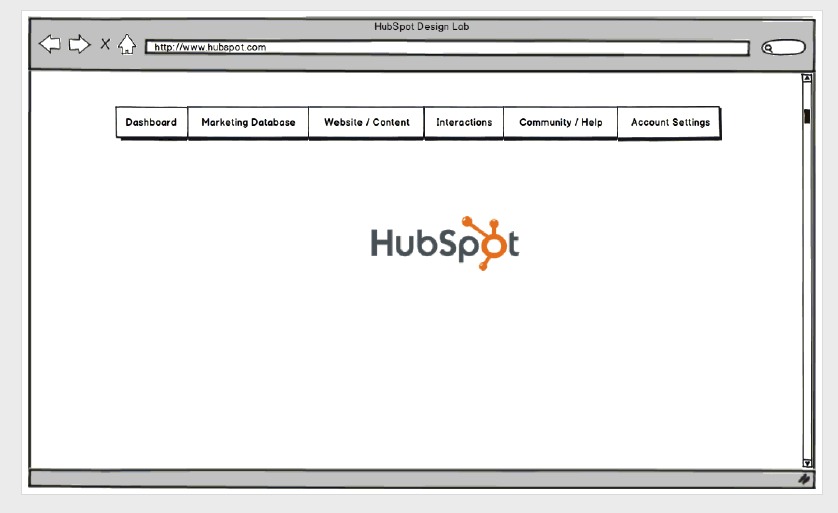

From those three layouts (plus the existing product navigation), we quickly created some low-fidelity mocks and scheduled some final usability testing. For this round of testing, we wanted to get both customer and prospective customer feedback. This would help us account for the fact that our customers had been "trained" on the product and had most likely become accustomed to the layout. Again using remote conferencing, we tested three customers and three non-customers by asking them where they would go to complete some key tasks within the product, taking roughly twenty minutes per test.

The results of this last test pretty conclusively suggested that an object-based approach would yield the most intuitive design. What was fascinating was that none of the test subjects, even existing customers who had been using the product and had embraced inbound marketing, organized the applications by the methodology. This confirmed our intuition and helped guide our thinking about how to organize the product applications as a whole.

Fast and Furious Customer-Driven Development

From soup to nuts, the testing process took about 6.5 hours of testing time to give us new and actionable information about a pretty darn important user experience decision in our product. We feel confident that the change will have a positive impact on usability, and will reduce customer support cases related to finding applications in the product. As of today, we're launching this new navigation to a handful of beta customers and will be gathering feedback. If all goes well, we'll open up the change to our entire install base in a couple of weeks. Since data is part of our DNA here, we'll continue to monitor our metrics against our stated goals to make sure we're moving in the right direction.
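For the curious, here is one way the session lengths quoted in this post add up to that 6.5-hour figure. The tally is a sketch under two assumptions: that the thirty-minute Support and Services conversation counts toward the total, and that the approximate numbers ("about four", "roughly thirty minutes") are taken at face value.

```python
# (round, number of sessions, minutes per session), using the figures quoted above
rounds = [
    ("Support and Services conversation", 1, 30),
    ("Marketing team open card sorts", 4, 30),
    ("Customer card sorts", 4, 30),
    ("Usability tests (3 customers + 3 non-customers)", 6, 20),
]

total_minutes = sum(sessions * minutes for _, sessions, minutes in rounds)
total_hours = total_minutes / 60

for name, sessions, minutes in rounds:
    print(f"{name}: {sessions * minutes} min")
print(f"Total: {total_hours} hours")
```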